“Is it fair?” This question is so emotionally loaded and generally so subjective that we need to start with a good, hard look at what it means in the context of language assessment. This is how H.L. Banerjee defines it in her paper Test Fairness in Second Language Assessment:

“Fairness, an essential quality of a test, has been broadly defined as equitable treatment of all test takers during the testing process, absence of measurement bias, equitable access to the constructs being measured, and justifiable validity of test score interpretation for the intended purpose(s).”

I want to focus on the first of these, equitable treatment of all test takers, in the context of Clarity’s Dynamic Placement Test.

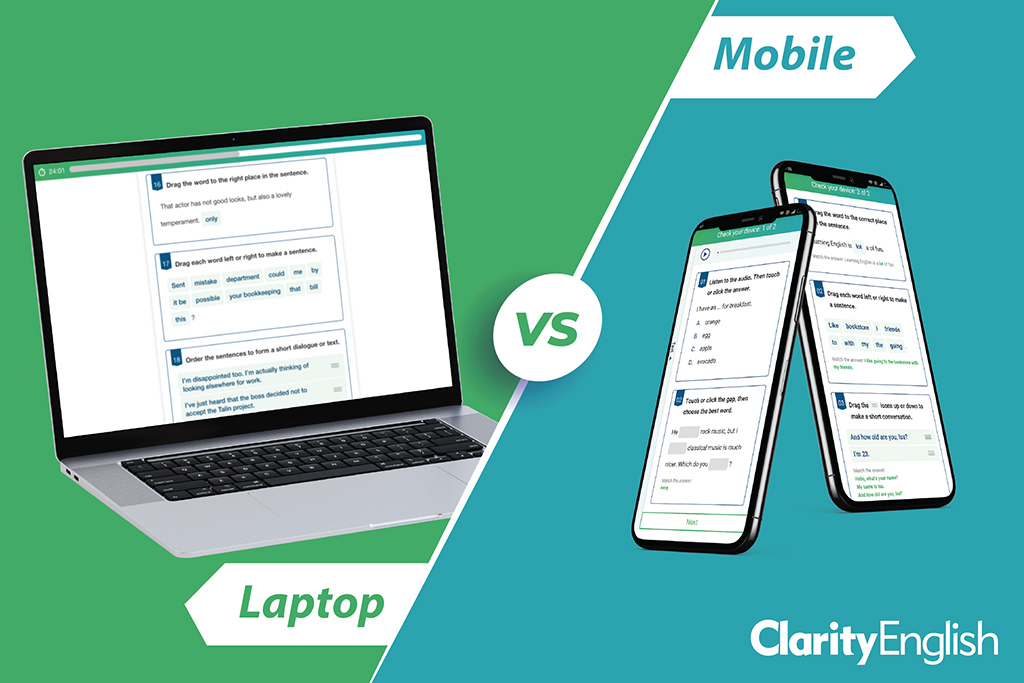

In considering equitable treatment of test takers we need to look at a number of factors that could have an impact on the result, and to collect data to see if they really do. These factors include cultural fairness (see my colleague Andrew’s video on that here), operational fairness (how easily can the test be subverted?) and device-related fairness. Will you get the same result if you take the same test on a desktop computer and a mobile phone?

To test this, we need to make some assumptions. We will assume that if the test is taken on a phone, then it is the student’s own phone and they are therefore familiar with it. We further assume that the phone is being used in a school environment. If the test is taken on a computer, then it is in a school lab. That means that we are not measuring students who are using mobiles or computers at home, which excludes additional variables in the environment. We take the desktop computer as the baseline and aim to show that results on a phone are equivalent.

We anticipate a number of issues. Here are just three:

- Reliability. With a phone, the risks include running out of battery, cracked screens and students forgetting to bring earphones. With a desktop, it may be that poorly maintained computers freeze, or don’t play audio.

- User interface. An obvious example is that a desktop has more space to show a reading text and you can therefore have the text and questions on the same screen. There may also be age-related factors, with younger test takers more familiar with the phone, and older students more comfortable with mouse and keyboard.

- Security. It is relatively easy for an invigilator to wander round a lab keeping an eye on computer screens. It is much more difficult to do this in a lecture theatre where students are using their mobiles.

So, where are we with measuring device fairness for the Dynamic Placement Test? Before isolating any of the three factors described above, we are collecting data to establish whether there is any variation in results in the first place. We have data from quite a large number of homogeneous test groups using the same device, for example Mexican first-year university students on computers, and Indonesian teachers of English in a lecture theatre on phones. But we have not yet been able to access enough heterogeneous groups using the same device in a controlled environment, or homogeneous groups using different devices. We do not want to set up “artificial” trials, so we need to wait for these setups to arrive from authentic test-taking situations.

Here are a few initial (and encouraging) findings:

- 38% of tests are taken on a mobile device and 62% on desktop. On both mobile and desktop, a small number of test takers try the familiarisation section more than once. Of those who do, 39% are on mobiles and 61% on desktop, which is almost exactly the same split as for test takers overall. This suggests that choice of device results in no significant difference in the ability to learn item type functionality.

- Tests taken on a mobile device take, on average, just 15 seconds less than on a computer (not a significant difference).

- One A2 reading item was presented 153 times in 1,111 tests: 70 times on mobile and 83 times on browser. Mobile test takers took an average of 184 seconds to answer it; browser test takers took an average of 188 seconds. Again, no significant difference.
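For readers who want to see how a comparison like the last one might be checked, here is a minimal sketch of a Welch two-sample test computed from summary statistics. The group sizes (70 and 83) and mean times (184 and 188 seconds) come from the finding above. The standard deviation of 60 seconds is purely an assumption for illustration, because the spread of answer times is not reported here; the function name `welch_from_summary` is also hypothetical, and this is not necessarily the analysis we ran. The p-value uses a normal approximation, which is only reasonable because the groups are fairly large.

```python
from math import sqrt
from statistics import NormalDist


def welch_from_summary(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Return (t, df, p) for a two-sided Welch test from summary statistics.

    The p-value uses a normal approximation to the t distribution,
    which is reasonable only when the degrees of freedom are large.
    """
    var_a = sd_a ** 2 / n_a  # squared standard error of mean A
    var_b = sd_b ** 2 / n_b  # squared standard error of mean B
    se = sqrt(var_a + var_b)
    t = (mean_a - mean_b) / se
    # Welch-Satterthwaite approximation of the degrees of freedom
    df = (var_a + var_b) ** 2 / (
        var_a ** 2 / (n_a - 1) + var_b ** 2 / (n_b - 1)
    )
    p = 2 * (1 - NormalDist().cdf(abs(t)))
    return t, df, p


# ASSUMPTION: the standard deviation is hypothetical, not taken from our data.
ASSUMED_SD = 60.0

# Means and group sizes from the A2 reading item: mobile first, then browser.
t, df, p = welch_from_summary(184, ASSUMED_SD, 70, 188, ASSUMED_SD, 83)
print(f"t = {t:.2f}, df = {df:.0f}, p = {p:.2f}")
```

Under that assumed spread, a four-second gap between groups of this size falls well short of the conventional 5% threshold. With real data, the per-response times would replace the assumed standard deviation, and a much smaller spread could change the conclusion.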

So far as we have been able to tell from item analysis on the data we do have, choice of device does not introduce a bias. If we later discover that in certain areas the device can influence the outcome, it will be interesting to devise tests and remedies to identify and eliminate the causes of that bias.