‘We like your online placement test,’ said the teacher at Taiwan’s Asia University, ‘but with 1,000 freshers and only 20 computers, we’d be halfway through the first semester before we could even sort out our classes.’

Placement tests are a chore. In most schools they are done on paper, and for an institution like Asia University this means a team of teachers marking 1,000 papers: a boring and demoralising task at the start of the year. And expensive. It’s not just the cost of the tests; it’s also the teachers’ time and the admin work once the papers have been graded.

Clarity’s objective in designing a new assessment model was to eliminate the teachers’ work and minimise admin and cost. We discovered that there were three main challenges, all of which had to be met to develop an effective online placement test.

1. Hardware

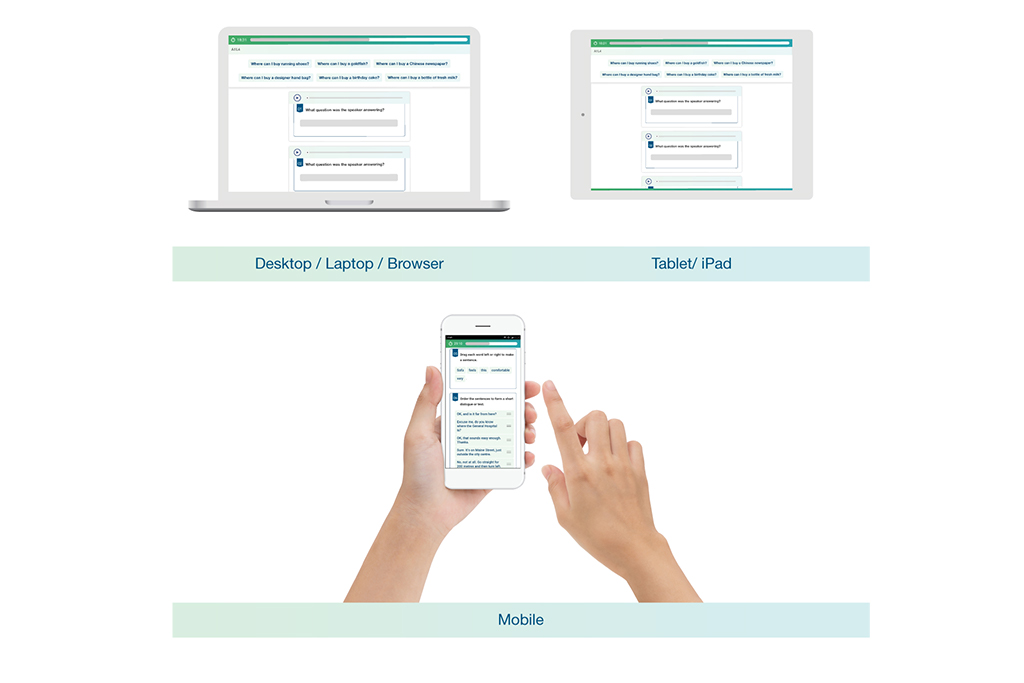

It didn’t take long to realise that every student in the Asia University lecture theatre had a powerful device sitting right in front of them: their phone. And a moment’s reflection tells us that a smartphone is not only convenient; it’s an ideal tool for a basic language test. As Dr Paul Booth of DePaul University, Chicago points out, ‘there has been a shift in the way we communicate; rather than face-to-face interaction, we’re tending to prefer mediated communication. We’d rather email than meet; we’d rather text than talk.’ And for our daily interactions, the tool of choice is now the smartphone. The first challenge, then, was to develop a test that runs not just on tablets and desktops, but on smartphones too.

2. Question types

The second challenge was to ensure that test items go beyond the usual dreary multiple-choice and gap-fill questions. Sean McDonald, the designer of Clarity’s new Dynamic Placement Test, was adamant that it should not be a paper test digitised for online delivery, but should be designed to exploit the capabilities of the medium. This meant building paths for test takers based on real-time performance, including a range of audio items, and using question types that simply do not work on paper.
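To make the idea of a path based on real-time performance concrete, here is a minimal sketch of one common approach, a simple "staircase" rule: a correct answer moves the test taker up a level, a wrong answer moves them down. This is an illustration only, not a description of how the Dynamic Placement Test actually routes test takers. The six-level scale, the starting level, and the function names `next_level` and `run_path` are all assumptions made for the example.

```python
from typing import List


def next_level(level: int, correct: bool, lowest: int = 1, highest: int = 6) -> int:
    """Move one level up after a correct answer, one level down after a wrong one.

    The 1-6 scale is an assumed, hypothetical range; the result is clamped to it.
    """
    step = 1 if correct else -1
    return max(lowest, min(highest, level + step))


def run_path(answers: List[bool], start: int = 3) -> List[int]:
    """Return every level visited, including the (assumed) starting level."""
    path = [start]
    for correct in answers:
        path.append(next_level(path[-1], correct))
    return path


if __name__ == "__main__":
    # Two correct answers, one wrong, then one correct.
    print(run_path([True, True, False, True]))
```

A paper test cannot do this, because every candidate receives the same fixed sequence of items regardless of how they are performing.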

An example is rearranging words to form a sentence. This question type looks at words, patterns, and structures and therefore tests students’ understanding of how the English language works. It takes a broader, more lexical approach to testing instead of looking at isolated vocabulary or grammar points.
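As a rough sketch of how such an item might be generated and marked, the code below shuffles the words of a target sentence and then checks a response against the original order. It is an assumption-laden illustration, not Clarity’s implementation: the helper names `make_item` and `is_correct`, the sample sentence, and the all-or-nothing scoring rule are hypothetical.

```python
import random
from typing import List, Optional


def make_item(sentence: str, seed: Optional[int] = None) -> List[str]:
    """Return the words of the sentence in a shuffled order.

    Reshuffles if the shuffle happens to reproduce the original order,
    provided the sentence contains at least two different words.
    """
    words = sentence.split()
    rng = random.Random(seed)
    shuffled = words[:]
    rng.shuffle(shuffled)
    while shuffled == words and len(set(words)) > 1:
        rng.shuffle(shuffled)
    return shuffled


def is_correct(response: List[str], sentence: str) -> bool:
    """All-or-nothing marking (an assumed rule): the order must match exactly,
    ignoring capitalisation."""
    target = [w.lower() for w in sentence.split()]
    return [w.lower() for w in response] == target


if __name__ == "__main__":
    target = "She has lived here for years"
    print(make_item(target, seed=1))                # the jumbled words shown to the test taker
    print(is_correct(target.split(), target))       # a response in the right order
```

A real item would probably also need to accept more than one valid ordering where the language allows it, which this sketch does not attempt.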

3. Test items

How difficult can it be to write the content for a placement test? Clarity’s first version drew on a bank of questions written by teachers, focusing on grammar, vocabulary and listening. But it didn’t produce consistent outcomes. Balancing the objective items with subjective ‘can do’ statements seemed to work — but actually, were we just asking test takers to tell us their level? What Clarity needed was testing professionals, and we were very lucky to team up with telc, a subsidiary of the German Adult Education Association, which has been creating international language tests for more than 45 years.

It took more than five years to meet and overcome these challenges, and Clarity and telc launched the Dynamic Placement Test in February 2017. The next, and perhaps the most exciting, goal is to build up a self-supporting community of test users. This means using data from real test takers to accommodate cultural or first-language influences on responses, making the Dynamic Placement Test ever more appropriate and accurate.