The HOPES-LEB project aims to improve the chances for a better future for young people in Lebanon. Here’s how they do it.

A placement test score should be a fair representation of a candidate’s ability, not their cultural knowledge. Here are four steps test designers can take to ensure that.

Whether you are recruiting 60 or 600 candidates, answer these three questions to choose the right placement test for your company’s needs.

Running placement tests for a large cohort of new students can be time-consuming, costly and tedious. Dr Adrian Raper's five-question framework makes the process far more efficient.

Online proctoring can help make remote assessment programmes more accessible and flexible. But what should you look for in a provider?

At JALT 2018, Matthias Prikoszovits (MP) from Universität Wien tells Sieon Lau (SL) about the importance of providing vocational experience, cultural knowledge and help with understanding legal language.

This case study describes the role of the Dynamic Placement Test in an international project to help young Syrian refugees enter higher education.

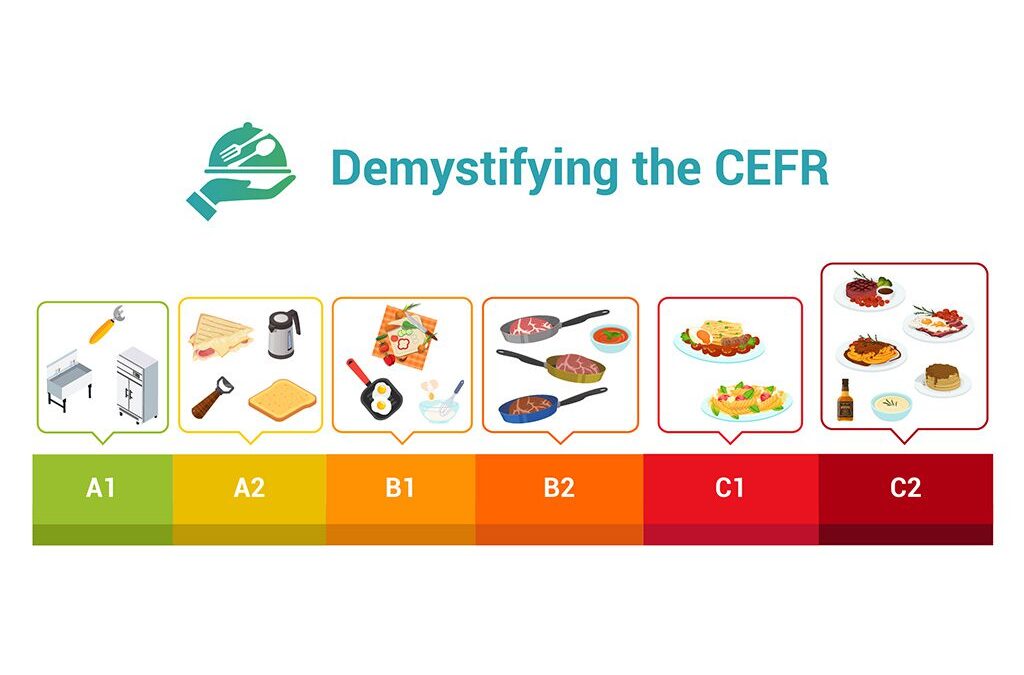

Andrew Stokes presents an ingenious technique for demystifying the CEFR. The idea, devised by Sean MacDonald of telc, is to compare it to cooking.

In this post, Andrew Stokes looks at the first two stages of matching test items to the CEFR – with an accompanying webinar clip and report.

Language ability in academic and real-life settings can differ greatly. That's why the CEFR is so well suited to measuring what students really 'can do'.